Dolphin research has come a long way from the days of underwater film cameras and limited shot counts. Today, technology plays a pivotal role in enhancing our understanding of these animals, from advanced sound processing to high-definition video and innovative underwater communication systems.

From the Sky

Drones are revolutionizing marine mammal research by providing a bird’s-eye view of habitats without disturbing the animals. They help researchers track movements, observe behaviors, and collect data from hard-to-reach areas. This technology allows for safer, more efficient studies, ultimately improving our understanding and conservation of marine mammals.

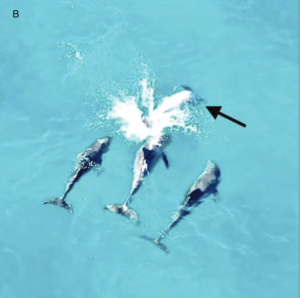

Last year, WDP published a scientific study that explores the occurrence of mixed-species groups of dolphins, specifically common bottlenose dolphins and Atlantic spotted dolphins, along the southeast coast of Florida. While these species have been seen interacting in other regions, this study is the first to document them together in Florida. Using a DJI Mavic Pro 2 drone, we observed their behavior, and the study highlights the need for further investigation into the reasons behind these mixed groups, particularly in Florida.

Read the study here.

Aerial view of dolphin behavior captured via drone.

We also used drones to record the release of Lamda following his rescue and rehabilitation.

Watch here.

Read the full story here.

Photo of Lamda.

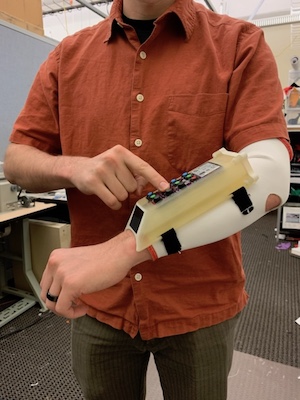

Navigating Dolphin Sounds with ASPOD

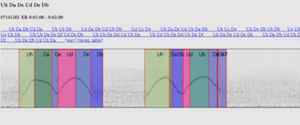

One of the major challenges in dolphin communication research is identifying which dolphin produces specific sounds. On land, researchers use triangulation methods with multiple microphones. Underwater, we employ similar techniques using hydrophones. Our ASPOD (Acoustic Source Positioning Overlay Device) combines a video camera with hydrophones to collect data on vocalizing dolphins. After processing, we can visualize which dolphin made which sound, aiding our understanding of their communication patterns.
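To make the triangulation idea concrete, here is a minimal sketch of one common building block: estimating the direction of a sound from the difference in its arrival time at two hydrophones. This is an illustration only, not a description of how ASPOD processes its data. The function names, the 0.5 m hydrophone spacing, the 96 kHz sample rate, and the synthetic click are all assumptions chosen for the example, and the speed of sound in seawater is taken as roughly 1500 m/s.

```python
import math

SPEED_OF_SOUND_WATER = 1500.0  # m/s, approximate value for seawater (assumption)


def estimate_delay_samples(sig_a, sig_b, max_lag):
    """Return the lag (in samples) at which sig_b best lines up with sig_a.

    A positive lag means the sound reached hydrophone B later than hydrophone A.
    Uses a brute-force cross-correlation over lags in [-max_lag, max_lag].
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i in range(len(sig_a)):
            j = i + lag
            if 0 <= j < len(sig_b):
                score += sig_a[i] * sig_b[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def bearing_from_delay(delay_s, spacing_m, c=SPEED_OF_SOUND_WATER):
    """Far-field bearing in degrees, measured from broadside of the hydrophone pair.

    Positive angles point toward hydrophone A (the side the sound reached first).
    """
    ratio = max(-1.0, min(1.0, c * delay_s / spacing_m))  # clamp against noise
    return math.degrees(math.asin(ratio))


# --- Synthetic demonstration (all values hypothetical) ---
sample_rate = 96_000   # samples per second
spacing = 0.5          # metres between the two hydrophones
true_delay = 10        # samples by which the click arrives later at B

# A short decaying sinusoid standing in for a dolphin click.
click = [math.exp(-k / 5.0) * math.sin(2 * math.pi * k / 8.0) for k in range(40)]

sig_a = [0.0] * 200
sig_b = [0.0] * 200
for k, value in enumerate(click):
    sig_a[50 + k] = value
    sig_b[50 + true_delay + k] = value

# The largest physically possible delay is spacing / speed of sound.
max_lag = math.ceil(spacing * sample_rate / SPEED_OF_SOUND_WATER)

lag = estimate_delay_samples(sig_a, sig_b, max_lag)
bearing = bearing_from_delay(lag / sample_rate, spacing)
print(f"estimated delay: {lag} samples, bearing: {bearing:.1f} degrees")
```

A single pair of hydrophones only gives a direction. Adding more hydrophones gives several such directions or delays, which can be combined to place the sound source in the video frame.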

Harnessing Machine Learning for Language Decoding

As artificial intelligence has gained traction, we have integrated machine learning into our research. These algorithms help us analyze our extensive sound datasets, allowing us to categorize sounds that were previously difficult to decipher. Using a user interface called UHURA, we can load sound files and search for patterns in dolphin communication, correlating vocalizations with behaviors captured on video.
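As a rough illustration of what searching for patterns can mean once sounds have been sorted into categories, the sketch below counts how often short runs of sound types repeat in a sequence. It is not how UHURA works internally. The function name, the category labels, and the example sequence are all made up for the illustration.

```python
from collections import Counter


def count_ngrams(labels, n):
    """Count every run of n consecutive labels in the sequence."""
    return Counter(tuple(labels[i:i + n]) for i in range(len(labels) - n + 1))


# Hypothetical sequence of already-categorized sounds, in the order they occurred.
sequence = [
    "whistle", "click", "click", "burst",
    "whistle", "click", "click", "burst",
    "whistle",
]

trigrams = count_ngrams(sequence, 3)

# Runs that occur more than once are candidates for a closer look,
# for example by checking what the dolphins were doing on video at those times.
repeated = {run: count for run, count in trigrams.items() if count > 1}
for run, count in repeated.items():
    print(" -> ".join(run), "occurs", count, "times")
```

Counting repeats is only a starting point. Whether a repeated run means anything depends on matching it against the behavior recorded at the same moment.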